Publications

Projects

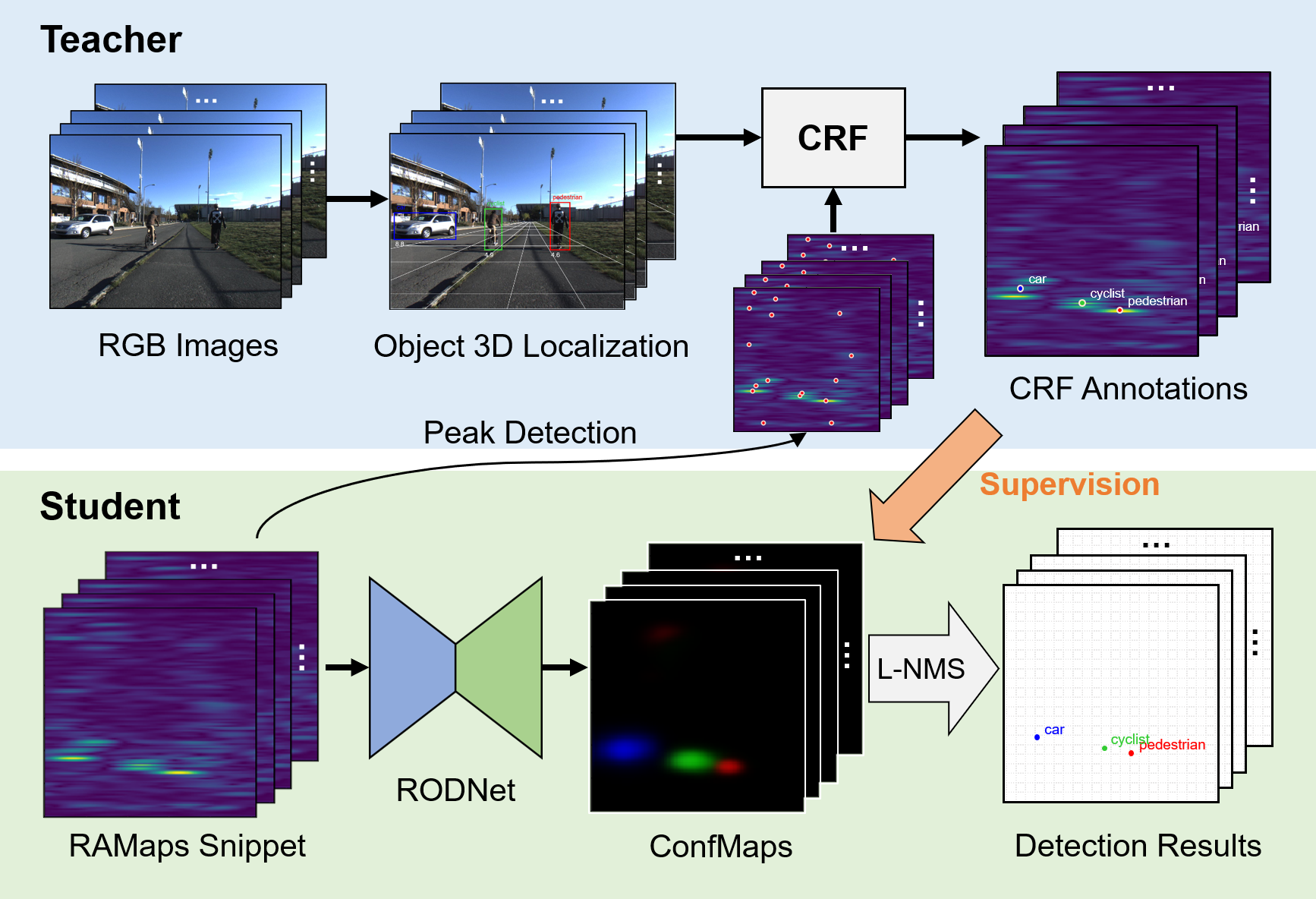

RODNet: Radar Object Detection Using Cross-Modal Supervision

We propose a deep radar object detection network (RODNet) that detects objects purely from carefully processed radar frequency data in the form of range-azimuth frequency heatmaps (RAMaps). Instead of relying on laborious human-labeled ground truth, we train RODNet on annotations generated automatically by a novel 3D localization method based on a camera-radar fusion (CRF) strategy. Extensive experiments show that RODNet achieves favorable object detection performance without the camera.
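To illustrate what a range-azimuth heatmap contains, here is a minimal sketch of a generic FMCW radar processing chain. It is not necessarily the exact RODNet preprocessing. It assumes one chirp of complex ADC samples from a uniform linear receive array with half-wavelength spacing, and the function name `range_azimuth_map` is hypothetical. A range FFT over fast-time samples maps beat frequency to range. An angle FFT across antennas maps the inter-antenna phase progression to azimuth.

```python
import numpy as np

def range_azimuth_map(adc, num_angle_bins=128):
    """Hypothetical sketch: one FMCW chirp -> range-azimuth magnitude heatmap.

    adc: complex array of shape (num_samples, num_rx), the fast-time ADC samples
         for each receive antenna of an assumed uniform linear array.
    Returns a real array of shape (num_samples, num_angle_bins).
    """
    num_samples, _ = adc.shape
    # Range FFT along fast time (window reduces sidelobes): beat frequency ~ range.
    window = np.hanning(num_samples)[:, None]
    range_fft = np.fft.fft(adc * window, axis=0)
    # Zero-padded angle FFT across antennas: phase step between antennas ~ azimuth.
    angle_fft = np.fft.fft(range_fft, n=num_angle_bins, axis=1)
    # Shift so the zero-azimuth bin sits in the middle column.
    angle_fft = np.fft.fftshift(angle_fft, axes=1)
    return np.abs(angle_fft)
```

For a simulated point target in range bin 20 at 30 degrees azimuth (phase step of pi * sin(30°) between antennas), the heatmap peaks at row 20 and, with 128 angle bins, at column 96. A real RAMap pipeline would typically also calibrate antennas and may stack multiple chirps, which this sketch leaves out.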

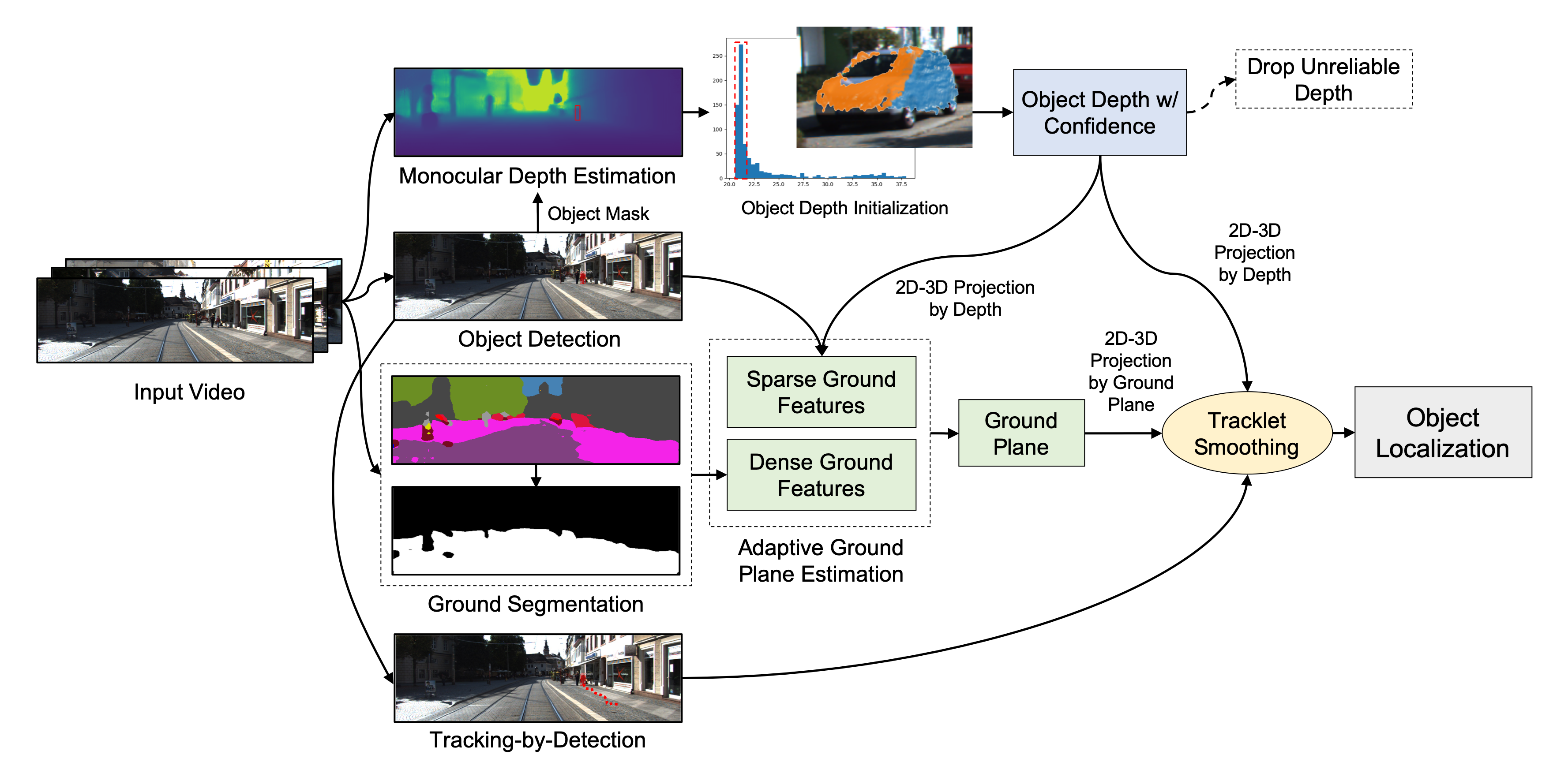

Monocular Visual Object 3D Localization in Road Scenes (ACMMM'19 Long Oral)

Yizhou Wang, Yen-Ting Huang, Jeng-Neng Hwang.

3D localization of objects in road scenes is important for autonomous driving and advanced driver-assistance systems (ADAS). However, 3D information is difficult to obtain with common monocular camera setups. In this paper, we propose a novel and robust method for 3D localization of monocular visual objects in road scenes that jointly integrates depth estimation, ground plane estimation, and multi-object tracking.
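As a rough illustration of why ground plane estimation helps with monocular 3D localization, the sketch below back-projects an image pixel (for example, the bottom center of a detected object's 2D box) onto a flat ground plane. It covers only this geometric step, not the full method. It assumes a pinhole camera with known intrinsics `K`, zero pitch and roll, a known camera height above flat ground, and camera axes with x pointing right, y down, and z forward. The function name `pixel_to_ground` is hypothetical.

```python
import numpy as np

def pixel_to_ground(u, v, K, cam_height):
    """Hypothetical sketch: intersect the viewing ray of pixel (u, v) with the ground.

    Assumes a pinhole camera (intrinsics K), zero pitch/roll, axes x right,
    y down, z forward, and a flat ground plane at y = cam_height (meters).
    Returns the 3D point (X, Y, Z) in camera coordinates.
    """
    # Direction of the viewing ray through the pixel: K^-1 [u, v, 1]^T.
    ray = np.linalg.solve(K, np.array([u, v, 1.0]))
    if ray[1] <= 0:
        # Rays at or above the horizon never hit the ground in front of the camera.
        raise ValueError("pixel is at or above the horizon")
    # Scale the ray until its y component equals the camera height.
    scale = cam_height / ray[1]
    return scale * ray
```

With focal length 1000 px, principal point (640, 360), and a camera 1.5 m above the ground, pixel (740, 460) maps to roughly (1.5, 1.5, 15) meters. Such a flat-ground estimate degrades with slopes and camera pitch, which is one reason to combine it with depth estimation and tracking.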

GIF Super-Resolution

Yizhou Wang, Liangliang Cao.

We propose a novel super-resolution approach for GIFs that uses two high-resolution frames (the first and last frames) together with the low-resolution data to generate a high-resolution GIF. To validate this approach, we collected a new super-resolution dataset for GIFs. Experiments on this dataset show that our algorithm significantly outperforms popular video super-resolution baselines while running at least 80 times faster on CPU.

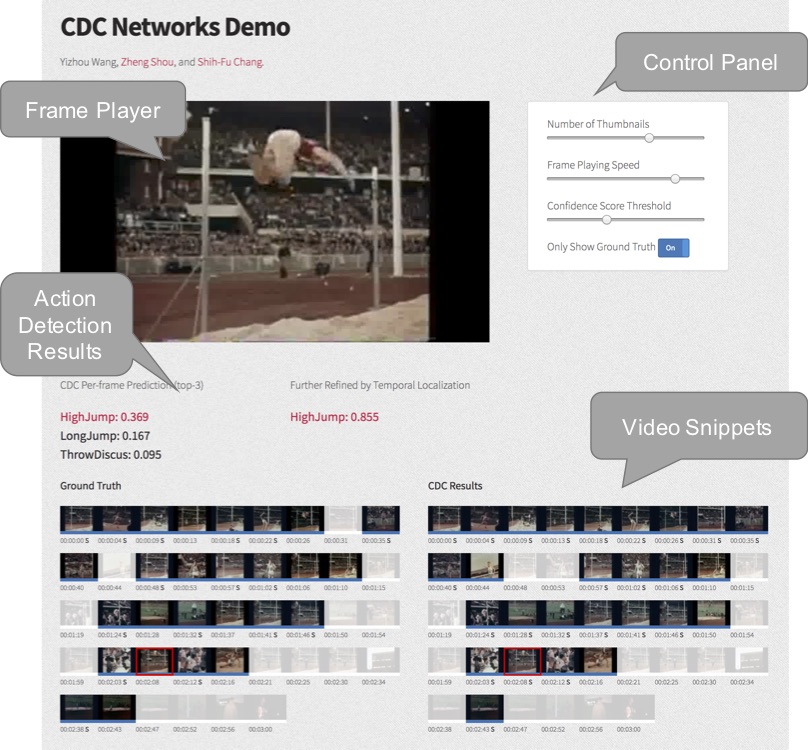

Demo: Temporal Action Localization (TAL) in Videos

Yizhou Wang, Zheng Shou, Shih-Fu Chang.

We propose a set of new web-based demonstration methods for the TAL problem, including snippet-level and frame-level demonstrations. On the demo website, users can either upload a video or select one from THUMOS'14 and run TAL algorithms on it. The available TAL algorithms are Segment-CNN and CDC Networks. The proposed demonstration methods present TAL results to users clearly and efficiently.